CN-Series

Deploy the CN-Series Firewall as a DaemonSet

Use Kubernetes tools such as kubectl or Helm to deploy and manage your Kubernetes clusters, apps, and firewall services.

Complete the following procedure to deploy the CN-Series firewall as a DaemonSet.

Before you begin, ensure that the CN-Series YAML file version is compatible with the PAN-OS version.

- PAN-OS 10.1.2 or later requires YAML 2.0.2

- PAN-OS 10.1.0 and 10.1.1 require YAML 2.0.0 or 2.0.1
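The two compatibility rules above can be expressed as a small lookup. The following is a minimal sketch, not vendor tooling: `required_yaml_version` is a hypothetical helper name, and it only covers the PAN-OS 10.1.x releases named in the list. Any other release prints `unknown` and should be checked against the official compatibility information.

```shell
# Sketch only: encodes the two PAN-OS 10.1.x rules listed above.
# required_yaml_version is a hypothetical helper, not part of any vendor tooling.
required_yaml_version() {
  # Split a version such as "10.1.2" into its dot-separated fields.
  old_ifs=$IFS
  IFS=.
  set -- $1
  IFS=$old_ifs
  major=${1:-}
  minor=${2:-}
  patch=${3:-0}

  # Drop any suffix such as "-h1" so the patch level compares as an integer.
  patch=${patch%%[!0-9]*}
  patch=${patch:-0}

  # Only the 10.1.x rules from the list above are encoded here.
  if [ "$major" != "10" ] || [ "$minor" != "1" ]; then
    echo "unknown"
    return 1
  fi

  if [ "$patch" -ge 2 ]; then
    echo "2.0.2"
  else
    echo "2.0.0 or 2.0.1"
  fi
}
```

For example, `required_yaml_version 10.1.3` prints `2.0.2`, and `required_yaml_version 10.1.1` prints `2.0.0 or 2.0.1`.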

- Set up your Kubernetes cluster.

- Verify that the cluster has adequate resources. Make sure that the cluster has the resources listed in CN-Series Prerequisites to support the firewall:

      kubectl get nodes
      kubectl describe node <node-name>

  View the information under the Capacity heading in the command output to see the CPU and memory available on the specified node.

  The CPU, memory, and disk storage allocation will depend on your needs. See CN-Series Performance and Scaling.

Ensure you have the following information:

- Collect the Endpoint IP address for setting up the API server on Panorama. Panorama uses this IP address to connect to your Kubernetes cluster.

- Collect the template stack name, device group name, Panorama IP address, and optionally the Log Collector Group Name from Panorama.

- Collect the authorization code and auto-registration PIN ID and value.

- The location of the container image repository to which you downloaded the images.
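Before editing the YAML files, it can help to confirm that every required value from the list above has been collected. The sketch below assumes you keep the values in shell variables; all of the variable names (`K8S_ENDPOINT_IP`, `PANORAMA_IP`, and so on) and the function name `check_deploy_inputs` are hypothetical and are not read by any CN-Series component. The optional Log Collector Group name is deliberately not checked.

```shell
# Sketch only: the variable names below are hypothetical placeholders for the
# values listed above; rename them to match however you store these values.
check_deploy_inputs() {
  missing=0
  for name in K8S_ENDPOINT_IP PANORAMA_IP TEMPLATE_STACK DEVICE_GROUP \
              AUTH_CODE PIN_ID PIN_VALUE IMAGE_REPO; do
    # Look up the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing: $name" >&2
      missing=1
    fi
  done
  # Returns 0 when every value is set, 1 otherwise.
  return "$missing"
}
```

Calling `check_deploy_inputs` prints one `missing:` line per unset value on standard error and returns a non-zero status if anything is missing.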

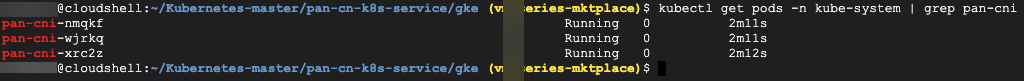

- (Optional) If you configured a custom certificate in the Kubernetes plugin for Panorama, you must create the cert secret by running the following command. Do not change the file name from ca.crt. The volume for custom certificates in pan-cn-mgmt.yaml and pan-cn-ngfw.yaml is optional.

      kubectl -n kube-system create secret generic custom-ca --from-file=ca.crt

- Edit the YAML files to provide the details required to deploy the CN-Series firewalls.

  You need to replace the image path in the YAML files to include the path to your private registry and provide the required parameters. See Editable Parameters in CN-Series Deployment YAML Files for details.

- Deploy the CNI DaemonSet.

  The CNI container is deployed as a DaemonSet (one pod per node) and it creates two interfaces on the CN-NGFW pod for each application deployed on the node. When you use kubectl to apply the pan-cni YAML files, the CNI becomes a part of the service chain on each node.

  - Verify that you have created the service account using pan-cni-serviceaccount.yaml.

  - Use kubectl to run pan-cni-configmap.yaml.

        kubectl apply -f pan-cni-configmap.yaml

  - Use kubectl to run pan-cni.yaml.

        kubectl apply -f pan-cni.yaml

  - Verify that you have modified the pan-cni-configmap and pan-cni YAML files.

  - Run the following command and verify that the output lists the pan-cni pods.

        kubectl get pods -n kube-system | grep pan-cni
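The final check in the CNI step can be scripted. The sketch below wraps the same `kubectl get pods -n kube-system | grep pan-cni` query in a hypothetical function, `pan_cni_ready`, that succeeds only when at least one pan-cni pod exists and every one of them reports a `Running` status. It assumes kubectl is already configured for the target cluster and relies on STATUS being the third column of the default `kubectl get pods` output.

```shell
# Sketch only: pan_cni_ready is a hypothetical helper name.
# Assumes kubectl is configured for the cluster you are deploying to.
pan_cni_ready() {
  # Same query as the manual check, without the header row.
  pods=$(kubectl get pods -n kube-system --no-headers | grep pan-cni)
  if [ -z "$pods" ]; then
    echo "no pan-cni pods found" >&2
    return 1
  fi

  # STATUS is the third column of the default "kubectl get pods" output.
  not_running=$(printf '%s\n' "$pods" | awk '$3 != "Running"' | wc -l | tr -d ' ')
  if [ "$not_running" -ne 0 ]; then
    echo "$not_running pan-cni pod(s) are not Running" >&2
    return 1
  fi

  echo "all pan-cni pods are Running"
}
```

A script could call `pan_cni_ready` in a retry loop and continue with the next step once it returns 0.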

- (CN-Series for EKS on AWS Outpost only) Update the storage class. To support the CN-Series deployed on AWS Outpost, you must use the storage driver aws-ebs-csi-driver, which ensures that Outpost pulls the volumes from the Outpost during dynamic Persistent Volume (PV) creation.

  - Apply the following YAML.

        kubectl apply -k "github.com/kubernetes-sigs/aws-ebs-csi-driver/deploy/kubernetes/overlays/stable/?ref=release-0.10"

  - Verify that the EBS CSI driver controller is running.

        kubectl -n kube-system get pods

  - Update pan-cn-storage-class.yaml to match the example below.

        kind: StorageClass
        apiVersion: storage.k8s.io/v1
        metadata:
          name: ebs-sc
        provisioner: ebs.csi.aws.com
        volumeBindingMode: WaitForFirstConsumer
        parameters:
          type: gp2

  - Add storageClassName: ebs-sc to pan-cn-mgmt.yaml in the locations shown below.

        volumeClaimTemplates:
        - metadata:
            name: panlogs
          spec:
            #storageClassName: pan-cn-storage-class  //For better disk iops performance for logging
            accessModes: [ "ReadWriteOnce" ]
            storageClassName: ebs-sc
            resources:
              requests:
                storage: 20Gi  # change this to 200Gi while using storageClassName for better disk iops
        - metadata:
            name: varlogpan
          spec:
            #storageClassName: pan-cn-storage-class  //For better disk iops performance for dp logs
            accessModes: [ "ReadWriteOnce" ]
            storageClassName: ebs-sc
            resources:
              requests:
                storage: 20Gi  # change this to 200Gi while using storageClassName for better disk iops
        - metadata:
            name: varcores
          spec:
            accessModes: [ "ReadWriteOnce" ]
            storageClassName: ebs-sc
            resources:
              requests:
                storage: 2Gi
        - metadata:
            name: panplugincfg
          spec:
            accessModes: [ "ReadWriteOnce" ]
            storageClassName: ebs-sc
            resources:
              requests:
                storage: 1Gi
        - metadata:
            name: panconfig
          spec:
            accessModes: [ "ReadWriteOnce" ]
            storageClassName: ebs-sc
            resources:
              requests:
                storage: 8Gi
        - metadata:
            name: panplugins
          spec:
            accessModes: [ "ReadWriteOnce" ]
            storageClassName: ebs-sc
            resources:
              requests:
                storage: 200Mi

- Deploy the CN-MGMT StatefulSet.

  By default, the management plane is deployed as a StatefulSet that provides fault tolerance. Up to 30 firewall CN-NGFW pods can connect to a CN-MGMT StatefulSet.

- (Required for statically provisioned PVs only) Deploy the Persistent Volumes (PVs) for the CN-MGMT StatefulSet.

- Create the directories to match the local volume names defined in pan-cn-pv-local.yaml. You need six (6) directories on at least 2 worker nodes. Log in to each worker node on which the CN-MGMT StatefulSet will be deployed to create the directories. For example, to create directories named /mnt/pan-local1 to /mnt/pan-local6, use the command:

mkdir -p /mnt/pan-local1 /mnt/pan-local2 /mnt/pan-local3 /mnt/pan-local4 /mnt/pan-local5 /mnt/pan-local6

- Modify pan-cn-pv-local.yaml. Match the hostname under nodeAffinity, and verify that spec.local.path matches the directories you created above. Then deploy the file to create a new StorageClass, pan-local-storage, and the local PVs.
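If you prepare several worker nodes, the directory creation step above can be wrapped in a short loop. This is a minimal sketch under the assumption that the directories follow the `pan-local1` to `pan-local6` naming from the example; `create_pan_local_dirs` is a hypothetical helper name, and the base directory defaults to `/mnt` only because that is what the example uses.

```shell
# Sketch only: creates pan-local1 .. pan-local6 under a base directory.
# create_pan_local_dirs is a hypothetical helper; the default base of /mnt
# mirrors the example paths above.
create_pan_local_dirs() {
  base=${1:-/mnt}
  i=1
  while [ "$i" -le 6 ]; do
    # mkdir -p is safe to re-run if the directory already exists.
    mkdir -p "$base/pan-local$i" || return 1
    i=$((i + 1))
  done
}
```

Run `create_pan_local_dirs` on each worker node that will host the CN-MGMT StatefulSet, and keep the paths consistent with spec.local.path in pan-cn-pv-local.yaml.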

- Verify that you have modified the pan-cn-mgmt-configmap and pan-cn-mgmt YAML files.

  Sample pan-cn-mgmt-configmap from EKS:

      apiVersion: v1
      kind: ConfigMap
      metadata:
        name: pan-mgmt-config
        namespace: kube-system
      data:
        PAN_SERVICE_NAME: pan-mgmt-svc
        PAN_MGMT_SECRET: pan-mgmt-secret
        # Panorama settings
        PAN_PANORAMA_IP: "x.y.z.a"
        PAN_DEVICE_GROUP: "dg-1"
        PAN_TEMPLATE_STACK: "temp-stack-1"
        PAN_CGNAME: "CG-EKS"
        # Intended License Bundle type - "CN-X-BASIC", "CN-X-BND1", "CN-X-BND2"
        # based on the authcode applied on the Panorama K8S plugin"
        PAN_BUNDLE_TYPE: "CN-X-BND2"
        #Non-mandatory parameters
        # Recommended to have same name as the cluster name provided in Panorama Kubernetes plugin - helps with easier identification of pods if managing multiple clusters with same Panorama
        #CLUSTER_NAME: "Cluster-name"
        #PAN_PANORAMA_IP2: "passive-secondary-ip"
        # Comment out to use CERTs otherwise bypass encrypted connection to etcd in pan-mgmt.
        # Not using CERTs for etcd due to EKS bug
        ETCD_CERT_BYPASS: ""  # No value needed
        # Comment out to use CERTs otherwise PSK for IPSec between pan-mgmt and pan-ngfw
        # IPSEC_CERT_BYPASS: ""  # No values needed

  Sample pan-cn-mgmt.yaml:

      initContainers:
        - name: pan-mgmt-init
          image: <your-private-registry-image-path>
      containers:
        - name: pan-mgmt
          image: <your-private-registry-image-path>
          terminationMessagePolicy: FallbackToLogsOnError

- Use kubectl to run the YAML files.

      kubectl apply -f pan-cn-mgmt-configmap.yaml
      kubectl apply -f pan-cn-mgmt-slot-crd.yaml
      kubectl apply -f pan-cn-mgmt-slot-cr.yaml
      kubectl apply -f pan-cn-mgmt-secret.yaml
      kubectl apply -f pan-cn-mgmt.yaml

  You must run pan-mgmt-serviceaccount.yaml only if you have not previously completed Create Service Accounts for Cluster Authentication.

- Verify that the CN-MGMT pods are up. It takes about 5-6 minutes.

      kubectl get pods -l app=pan-mgmt -n kube-system

      NAME             READY   STATUS    RESTARTS   AGE
      pan-mgmt-sts-0   1/1     Running   0          27h
      pan-mgmt-sts-1   1/1     Running   0          27h

- Deploy the CN-NGFW pods.

  By default, the firewall dataplane CN-NGFW pod is deployed as a DaemonSet. An instance of the CN-NGFW pod can secure traffic for up to 30 application pods on a node.

  - Verify that you have modified the YAML files as detailed in PAN-CN-NGFW-CONFIGMAP and PAN-CN-NGFW.

        containers:
          - name: pan-ngfw-container
            image: <your-private-registry-image-path>

  - Use kubectl apply to run pan-cn-ngfw-configmap.yaml.

        kubectl apply -f pan-cn-ngfw-configmap.yaml

  - Use kubectl apply to run pan-cn-ngfw.yaml.

        kubectl apply -f pan-cn-ngfw.yaml

  - Verify that all the CN-NGFW pods are running (one per node in your cluster). This is sample output from a 4-node on-premises cluster.

        kubectl get pods -n kube-system -l app=pan-ngfw -o wide

        NAME                READY   STATUS    RESTARTS   AGE   IP               NODE                NOMINATED NODE   READINESS GATES
        pan-ngfw-ds-8g5xb   1/1     Running   0          27h   10.233.71.113    rk-k8-node-1        <none>           <none>
        pan-ngfw-ds-qsrm6   1/1     Running   0          27h   10.233.115.189   rk-k8-vm-worker-1   <none>           <none>
        pan-ngfw-ds-vqk7z   1/1     Running   0          27h   10.233.118.208   rk-k8-vm-worker-3   <none>           <none>
        pan-ngfw-ds-zncqg   1/1     Running   0          27h   10.233.91.210    rk-k8-vm-worker-2   <none>           <none>

- Verify that you can see CN-MGMT, CN-NGFW, and the PAN-CNI on the Kubernetes cluster.

      kubectl -n kube-system get pods

      pan-cni-5fhbg       1/1   Running   0   27h
      pan-cni-9j4rs       1/1   Running   0   27h
      pan-cni-ddwb4       1/1   Running   0   27h
      pan-cni-fwfrk       1/1   Running   0   27h
      pan-cni-h57lm       1/1   Running   0   27h
      pan-cni-j62rk       1/1   Running   0   27h
      pan-cni-lmxdz       1/1   Running   0   27h
      pan-mgmt-sts-0      1/1   Running   0   27h
      pan-mgmt-sts-1      1/1   Running   0   27h
      pan-ngfw-ds-8g5xb   1/1   Running   0   27h
      pan-ngfw-ds-qsrm6   1/1   Running   0   27h
      pan-ngfw-ds-vqk7z   1/1   Running   0   27h
      pan-ngfw-ds-zncqg   1/1   Running   0   27h

- Annotate the application YAML or namespace so that the traffic from their new pods is redirected to the firewall.

  You need to add the following annotation to redirect traffic to the CN-NGFW for inspection:

      annotations:
        paloaltonetworks.com/firewall: pan-fw

  For example, for all new pods in the "default" namespace:

      kubectl annotate namespace default paloaltonetworks.com/firewall=pan-fw

  On some platforms, the application pods can start when the pan-cni is not active in the CNI plugin chain. To avoid such scenarios, you must specify the volumes as shown here in the application pod YAML.

      volumes:
        - name: pan-cni-ready
          hostPath:
            path: /var/log/pan-appinfo/pan-cni-ready
            type: Directory

- Deploy your application in the cluster.
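When more than one namespace needs the firewall annotation, the `kubectl annotate` command from the annotation step can be repeated in a loop. The sketch below is one way to do that; `annotate_namespaces` is a hypothetical helper name, and the `--overwrite` flag is an addition not shown in the procedure, included here so that re-running the helper does not fail on a namespace that already carries the annotation. It assumes kubectl is configured for the target cluster.

```shell
# Sketch only: annotate_namespaces is a hypothetical helper name.
# --overwrite is an assumption added here so the helper can be re-run safely;
# the procedure above uses the command without it.
annotate_namespaces() {
  for ns in "$@"; do
    kubectl annotate namespace "$ns" \
      paloaltonetworks.com/firewall=pan-fw --overwrite || return 1
  done
}
```

For example, `annotate_namespaces default staging` annotates both namespaces, where `staging` is only an illustrative name. As the procedure notes, the annotation affects new pods in those namespaces.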